Collaborative Intelligence: Agentic Teamwork

There is a moment in every great jazz improvisation where the individual musicians stop playing at each other and begin playing through each other. Critic Ira Gitler, writing about Coltrane, called it “sheets of sound.” Miles simply called it listening. I’ve been thinking about this phenomenon lately, not in a smoky club, but in the sterile glow of my development environment, watching my AI agents attempt something remarkably similar.

Clearly, we have entered the age of agentic AI. But like most revolutions, it arrived while we were arguing about something else — usually whether a single model could pass the bar exam. We missed the profound shift: AI is becoming a team sport.

The Solo Virtuoso Fallacy

In my previous wanderings through non-ergodic territory, I explored how averages lie to us. The same logic haunts our current AI architecture. We are suffering from the Solo Virtuoso Fallacy: the belief that if we just make the model big enough, it will be good at everything.

But cognition, like markets, isn’t ergodic. A single agent, no matter how vast its context window, is trapped in its own statistical average. Its hallucinations go uncaught because no one checks its work. It spirals into reasoning loops because it lacks an external disruptor.

To get better results, we don’t need a smarter god-model. We need a smarter committee. We need Collaborative Intelligence.

The Architecture of Artificial Argument

The breakthrough papers from 2023 and 2024, from Microsoft’s work on AutoGen to the Multi-Agent Debate frameworks, point to what urban planners learned decades ago: specialization creates resilience.

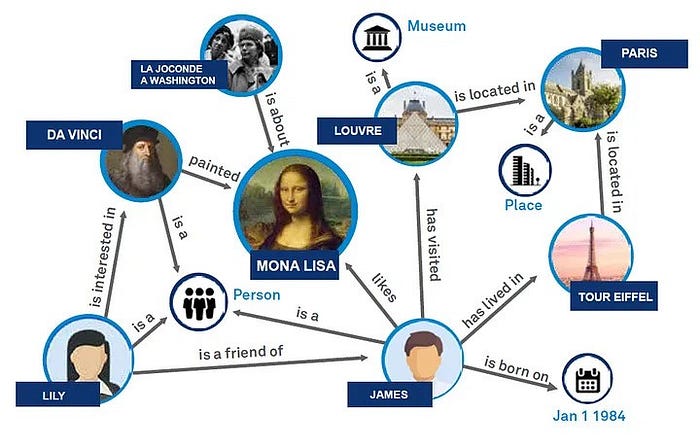

The emerging topology of these systems is fascinating. It’s not a pipeline; it’s a graph.

Figure 1: The shift from linear prompting to conversational graphs. (Source: Microsoft AutoGen)
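A minimal, framework-free sketch of the difference: in a pipeline each stage runs once in a fixed order, while in a conversational graph each agent chooses who speaks next, so cycles (draft, critique, redraft) become possible. The names `run_graph`, `writer`, and `reviewer` are hypothetical, the agents are deterministic stubs standing in for model calls, and none of this is AutoGen's actual API.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical agent signature: reads the transcript so far and returns
# (reply, name of the next agent to speak, or "END").
Agent = Callable[[List[str]], Tuple[str, str]]


def run_graph(agents: Dict[str, Agent], start: str, max_turns: int = 10) -> List[str]:
    """Route the conversation along edges that each agent picks at runtime."""
    transcript: List[str] = []
    current = start
    for _ in range(max_turns):  # cap so a cyclic graph cannot loop forever
        reply, nxt = agents[current](transcript)
        transcript.append(f"{current}: {reply}")
        if nxt == "END":
            break
        current = nxt
    return transcript


def writer(transcript: List[str]) -> Tuple[str, str]:
    # Stub for an LLM writer: numbers its drafts by counting its own turns.
    drafts = sum(1 for line in transcript if line.startswith("writer:"))
    return f"draft v{drafts + 1}", "reviewer"


def reviewer(transcript: List[str]) -> Tuple[str, str]:
    # Stub for an LLM reviewer: rejects the first draft, approves the next.
    # Sending work back upstream is the cycle a linear pipeline cannot express.
    if transcript[-1].endswith("v1"):
        return "needs revision", "writer"
    return "approved", "END"


log = run_graph({"writer": writer, "reviewer": reviewer}, start="writer")
print(log)
```

Running it yields four turns: the reviewer sends draft v1 back and approves draft v2. The `max_turns` cap is an assumed safety valve, not part of any particular framework.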

When we architect these systems effectively, we are usually deploying three specific patterns:

1. The Choreography of Conflict (Adversarial Collaboration)

The most effective multi-agent architectures embrace something deeply counterintuitive: structured disagreement.

In a pattern often called “Multi-Persona Self-Correction,” we instantiate agents with opposing goals.

- The Architect proposes a solution.

- The Critic ruthlessly tears it apart, looking for security flaws or hallucinations.

- The Synthesizer mediates the conflict.

This isn’t mere devil’s advocacy. It’s a recognition that single-perspective reasoning falls prey to coherence-seeking: a lone model tends to elaborate on its first plausible answer rather than challenge it. As noted in recent research on “ChatDev” and MIT’s consensus papers, the friction is the feature. The system becomes antifragile because the internal conflict pre-empts the external failure.
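The Architect/Critic/Synthesizer loop can be sketched as follows, assuming each role would be a separately prompted model call. Here the roles are hypothetical stub functions with hard-coded behavior so the control flow stays visible; the stopping rule (quit when the Critic raises no objections, or after `max_rounds`) is likewise an assumption, not a published protocol.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Proposal:
    steps: List[str]
    history: List[str] = field(default_factory=list)


def architect(task: str) -> Proposal:
    # Stub for a model prompted to propose a solution; deliberately flawed.
    return Proposal(steps=["accept password", "store password in plaintext"])


def critic(p: Proposal) -> List[str]:
    # Stub for a model prompted only to find faults (one hard-coded rule here).
    return [s for s in p.steps if "plaintext" in s]


def synthesizer(p: Proposal, objections: List[str]) -> Proposal:
    # Stub for a mediator that revises only the steps the Critic attacked.
    revised = [
        s.replace("in plaintext", "as a salted hash") if s in objections else s
        for s in p.steps
    ]
    return Proposal(steps=revised, history=p.history + [f"fixed: {o}" for o in objections])


def adversarial_loop(task: str, max_rounds: int = 3) -> Proposal:
    proposal = architect(task)
    for _ in range(max_rounds):
        objections = critic(proposal)
        if not objections:  # the Critic has nothing left to attack
            break
        proposal = synthesizer(proposal, objections)
    return proposal


result = adversarial_loop("design password storage")
print(result.steps)
```

The Critic never writes a fix itself; it only attacks, and the Synthesizer decides what changes. Keeping those responsibilities in separate roles is the design choice the pattern depends on.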

2. Feudalism and Specialization (Role-Based Agents)

Frameworks like CrewAI have popularized a pattern I reluctantly admire: feudal agent hierarchies. You define a “crew” with roles—Researcher, Writer, Reviewer—each with its own prompt-primed persona.

A “Senior Research Analyst” agent doesn’t just search for information; it evaluates sources with the skepticism that the role implies. The insight here is almost theological: We are what we pretend to be, even in silicon.
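A sketch of role-based agents, loosely modeled on the role/goal/backstory fields that CrewAI agents are configured with, but written without any framework: `RoleAgent`, `run_crew`, and `fake_llm` are hypothetical names, and `fake_llm` stands in for a real model call. It illustrates that the persona is nothing more than a prompt prefix, and that a sequential crew simply hands each role's output to the next.

```python
from dataclasses import dataclass
from typing import Callable, List

# (system_prompt, user_message) -> reply; in practice this would call a model.
LLM = Callable[[str, str], str]


@dataclass
class RoleAgent:
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        # The persona lives entirely in this prompt prefix.
        return f"You are a {self.role}. Your goal: {self.goal}. {self.backstory}"

    def act(self, llm: LLM, message: str) -> str:
        return llm(self.system_prompt(), message)


def run_crew(agents: List[RoleAgent], llm: LLM, task: str) -> str:
    """Sequential hand-off: each role works on the previous role's output."""
    work = task
    for agent in agents:
        work = agent.act(llm, work)
    return work


def fake_llm(system: str, message: str) -> str:
    # Stub model (Python 3.9+): tags the message with the role in the prompt.
    role = system.split(".")[0].removeprefix("You are a ")
    return f"[{role}] {message}"


crew = [
    RoleAgent("Senior Research Analyst", "find credible sources", "You distrust unsourced claims."),
    RoleAgent("Writer", "draft a clear summary", "You write plainly."),
    RoleAgent("Reviewer", "catch factual errors", "You check every claim."),
]
print(run_crew(crew, fake_llm, "agentic AI"))
```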

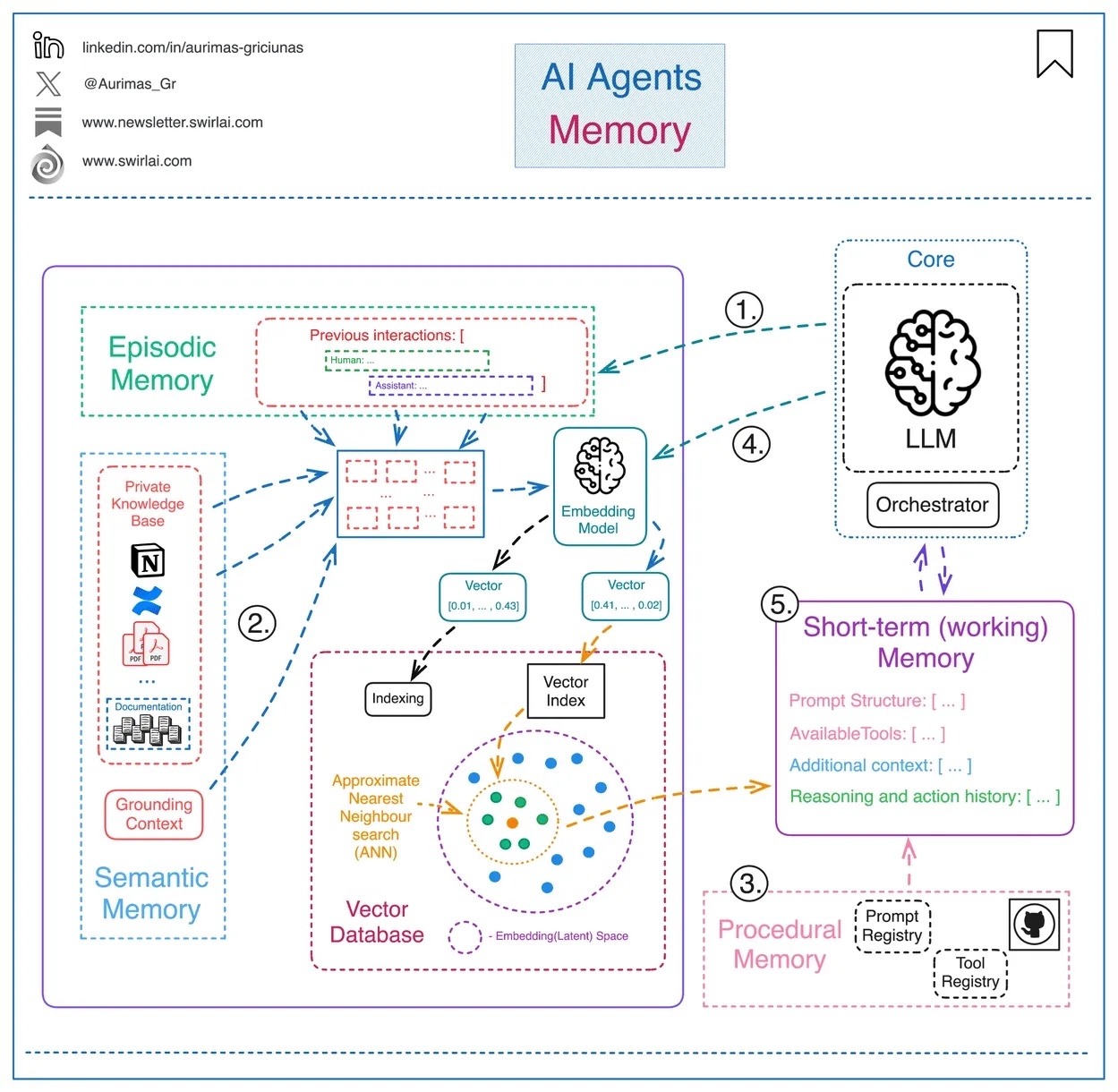

3. The Context Bus (Shared State)

Here is where the engineering gets hard. If Agent A learns a critical fact, Agent B needs to know it instantly without re-reading the entire conversation history.

We are seeing architectures evolve toward semantic shared memory—implemented beautifully in tools like LangGraph. Think of it as a “Context Bus” or a collective wiki that all agents subscribe to. It is the difference between a group of people shouting in a room and a group of people editing a shared document.

Figure 2: Agentic Memory in multi-agent systems
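A minimal publish/subscribe "blackboard" illustrates the Context Bus idea: agents write facts to a shared store keyed by topic, subscribers are notified the moment a fact lands, and a newly activated agent reads a compact snapshot instead of the full transcript. `ContextBus` and its methods are hypothetical, not LangGraph's API; LangGraph's own approach passes a shared state object between the nodes of a graph.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Callback = Callable[[str, Any], None]


class ContextBus:
    """Hypothetical shared memory: agents publish facts; others subscribe by topic."""

    def __init__(self) -> None:
        self._facts: Dict[str, Dict[str, Any]] = {}
        self._subscribers: DefaultDict[str, List[Callback]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callback) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, key: str, value: Any, author: str) -> None:
        # Keys look like "topic/name"; the part before "/" selects subscribers.
        self._facts[key] = {"value": value, "author": author}
        topic = key.split("/", 1)[0]
        for callback in self._subscribers[topic]:
            callback(key, value)  # pushed immediately, no transcript re-read

    def snapshot(self) -> Dict[str, Any]:
        """Compact view a newly activated agent reads instead of the full history."""
        return {key: fact["value"] for key, fact in self._facts.items()}

    def author_of(self, key: str) -> str:
        return self._facts[key]["author"]


bus = ContextBus()
agent_b_inbox: List[str] = []
bus.subscribe("security", lambda key, value: agent_b_inbox.append(f"{key}={value}"))

bus.publish("security/auth", "tokens expire after 15 minutes", author="agent_a")
bus.publish("style/tone", "formal", author="agent_a")
print(agent_b_inbox)
print(bus.snapshot())
```

Agent B hears about the security fact as soon as it is published and never sees the style note it did not subscribe to. Recording the author next to each value is an assumed design choice that makes it possible to trace which agent introduced a fact if it later proves wrong.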

Failure Modes: The Abilene Paradox in Silicon

However, as a systems thinker, I have to ask: what happens when the committee goes crazy?

I’ve cataloged a specific failure mode in these swarms: the Multi-Agent Abilene Paradox. In organizational theory, the Abilene Paradox describes a group that collectively agrees on a course of action none of its members individually wants, because each assumes the others want it.

Agents fall into a version of this too. When one agent states an error confidently, the others tend to build on it rather than challenge it, and the group reinforces its own hallucinations into an echo chamber of error. To mitigate this, we need deterministic guardrails injected into the probabilistic mix. We need “Tool-Use” agents that don’t just “think,” but verify against trusted external tools (calculators, compilers, databases).
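A sketch of one such deterministic guardrail, assuming agents emit arithmetic claims in the form `expression = value`. Each claim is checked by a small calculator built on Python's `ast` module rather than by polling the other agents, so a confident majority cannot outvote arithmetic. `safe_eval`, `verify_claim`, and `accept` are hypothetical names; the evaluator deliberately supports only `+`, `-`, `*`, `/`, and unary minus, and never calls `eval()` on agent output.

```python
import ast
import operator
from typing import Dict

# Whitelisted operators: anything else in an agent's expression is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def safe_eval(expr: str) -> float:
    """Deterministically evaluate a plain arithmetic expression."""

    def walk(node: ast.AST) -> float:
        # Accept int/float literals only (type() check excludes bools).
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.operand))
        raise ValueError(f"unsupported expression: {expr!r}")

    return walk(ast.parse(expr.strip(), mode="eval").body)


def verify_claim(claim: str) -> bool:
    """Check an 'expression = value' claim against the calculator, not peers."""
    expr, _, stated = claim.partition("=")
    return abs(safe_eval(expr) - float(stated.strip())) < 1e-9


def accept(claims_by_agent: Dict[str, str]) -> Dict[str, bool]:
    # Consensus plays no role: every claim passes or fails on its own.
    return {agent: verify_claim(c) for agent, c in claims_by_agent.items()}


votes = {"agent_a": "17 * 23 = 381", "agent_b": "17 * 23 = 381", "agent_c": "17 * 23 = 391"}
print(accept(votes))
```

Here two of three agents agree on 381 and both are rejected; only the claim matching 17 * 23 = 391 passes.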

Toward Contemplative Engineering

We stand at a peculiar moment. We are building systems sophisticated enough to collaborate, but not yet wise enough to collaborate well.

What I find myself advocating for is contemplative engineering. It is the discipline of pausing before implementation to ask:

- What perspectives are absent from this agent ensemble?

- Where have we encoded our assumptions invisibly?

- Who suffers if this system fails?

The future isn’t a smarter chatbot. It’s a smarter team. And for the first time in history, you get to be the coach of a roster that never sleeps, arguing with itself in the dark, trying to find the truth in the noise.

Meet me on the corner of State and Non-Ergodic. Bring your agents.
